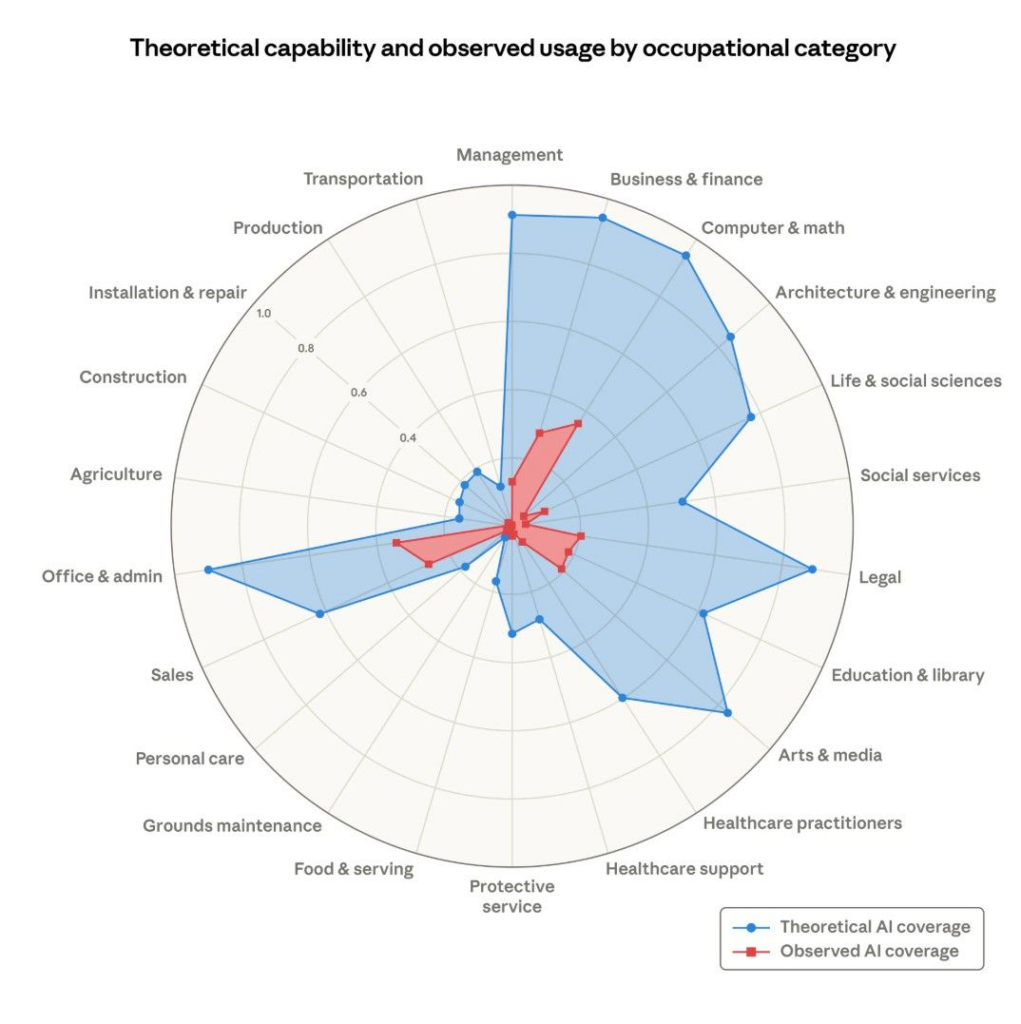

Anthropic’s new report says there is a gap between what AI could do and what it currently does in real work. The report calls this current use “observed exposure.” It combines theoretical LLM capability with real Claude usage data, giving more weight to work-related and automated uses. Its main finding is that actual AI use still accounts for only a small fraction of what the technology could theoretically handle.

That sounds like a neutral finding, but the report goes a step further. It says that as AI improves and deployment deepens, current use will grow toward the larger theoretical capability. Anthropic is not only measuring a gap. It is also suggesting that the gap will, and perhaps should, close over time.

That reading is too simple. In many fields where AI use remains limited, the problem is not slow adoption. It is responsibility. Anthropic itself admits that actual use may fall short of theoretical capability because of legal constraints, software requirements, and human verification. In law, medicine, finance, auditing, and engineering, these are not minor barriers. They are the core rules of the profession.

A doctor, lawyer, auditor, or pharmacist does not just produce information. These professionals are trained, certified, and legally accountable for their decisions. They can be sued, sanctioned, or have their license revoked. That is very different from asking AI to draft code or summarize a document. When Anthropic treats the gap between potential and actual use as space waiting to be filled, it risks confusing professional safeguards with a missed technological opportunity.

Some of the empty spaces in the chart may not signal that AI has not advanced far enough. They may signal that certain institutions still insist that important decisions remain tied to human judgment and legal responsibility. In those settings, slower AI adoption is not a sign of backwardness. It may be a safeguard. And that safeguard matters, because capability alone does not answer the hardest question: who is accountable when the system gets it wrong?
